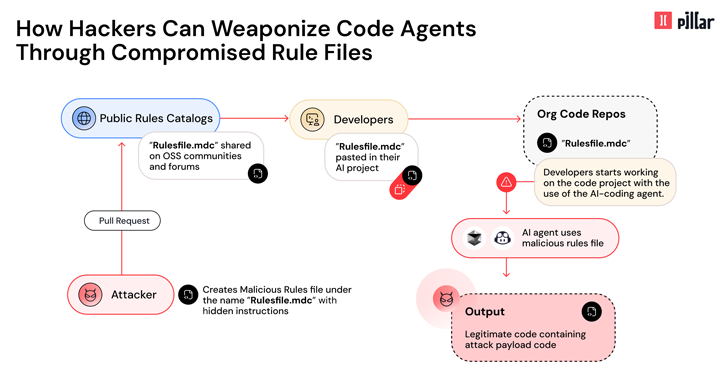

Cybersecurity researchers have disclosed details of a new supply chain attack vector dubbed Rules File Backdoor that affects artificial intelligence (AI)-powered code editors like GitHub Copilot and Cursor, causing them to inject malicious code.
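A rules file is ordinary text, so one defensive check is to scan it for characters that do not render on screen. The following is a minimal sketch, not the researchers' method: this excerpt does not say how instructions are concealed, so treating invisible Unicode format characters (zero-width and bidirectional controls) as the hiding mechanism is an assumption, and the helper name `find_invisible_chars` is hypothetical.

```python
import unicodedata


def find_invisible_chars(text):
    """Return (line, column, codepoint, name) for each Unicode format character.

    Assumption: any character in general category "Cf" (zero-width spaces and
    joiners, bidirectional overrides, tag characters) is worth flagging in a
    rules file. This heuristic can produce false positives in legitimate text.
    """
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for col, ch in enumerate(line, start=1):
            if unicodedata.category(ch) == "Cf":
                codepoint = f"U+{ord(ch):04X}"
                name = unicodedata.name(ch, "UNKNOWN")
                findings.append((line_no, col, codepoint, name))
    return findings


if __name__ == "__main__":
    # Hypothetical rules-file content with two invisible characters embedded.
    sample = "Use tabs for indentation.\u200b\nPrefer const.\u202e"
    for finding in find_invisible_chars(sample):
        print(finding)
```

A check like this could run in code review or CI on any rules file pulled from a shared or third-party source. It only flags candidates for a human to inspect and would not catch instructions hidden by other means.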

“This technique enables hackers to silently compromise AI-generated code by injecting hidden malicious instructions into seemingly innocent –

Read More – The Hacker News